Organizations collect thousands of open-ended survey comments every year, but turning that feedback into insights has traditionally taken weeks of manual work. AI sentiment analysis for surveys changes that by using natural language processing to analyze tone, themes, and patterns across large volumes of feedback in hours instead of weeks.

I learned a lesson about “sentiment” the hard way: by moving from Scotland to Texas.

Back home, if you say you were “pished” last night, most folk hear “a bit drunk.” In the U.S., a very similar-sounding word means something closer to angry. Same sound. Completely different meaning. And that tiny semantic mismatch is exactly how sentiment models can get tripped up in survey comments: confident labels, wrong interpretation.

My favorite real-world example comes from Angry Scotsman Brewing, where the head brewer’s nickname stuck after he used “pished” the Scottish way — and his American roommates heard something else entirely. Small language differences like that matter more than most dashboards admit.

So what does all of that have to do with AI sentiment analysis? Well, that tiny semantic gap captures the core idea: Survey comments are rich, local, and contextual — and the way we analyze them has changed dramatically with AI.

Jump to a Section

- What Is AI Sentiment Analysis for Surveys?

- Early AI Wasn’t Really AI (and It Often Lied With Confidence)

- What Modern AI Changed: Sentiment Became Contextual — and Operational

- Why This Matters for Multifamily, Commercial, and Employee Surveys

- How To Use AI Sentiment Analysis in Surveys: A Practical Playbook

- Key Takeaway

- FAQs About AI Sentiment Analysis for Surveys

What Is AI Sentiment Analysis for Surveys?

AI sentiment analysis for surveys uses natural language processing (NLP) and machine learning to automatically identify themes, intent, and emotional tone in open-ended survey responses. Instead of manually reading and coding hundreds or thousands of comments, AI analyzes the language in each response to classify sentiment (e.g., positive, neutral, or negative) and to surface patterns across large datasets.

For organizations collecting resident or employee feedback, this approach dramatically reduces the time required to analyze qualitative responses while making it easier to detect emerging issues, recurring themes, and shifts in sentiment at scale.

But while AI can analyze survey feedback far faster than traditional manual coding, the quality of those insights depends on how well the system understands real human language — including dialect, context, and nuance.

Before AI: The “Qualitative Grind”

Open-ended survey responses have always contained some of the most useful insights in resident and employee feedback. The challenge wasn’t their value — it was the amount of human effort required to analyze them. For years, turning raw comments into actionable insights meant a slow, methodical workflow that looked something like this:

- Export comments into a spreadsheet.

- Build a codebook covering themes like maintenance, cleanliness, communication, safety, leadership, workload, etc.

- Hand-code responses into themes (often more than one theme per comment).

- Check quality by double-coding a sample and aligning coders so their tagging stays consistent.

- Summarize themes with quotes + counts for reporting.
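The counting part of that last step is simple bookkeeping once the human coding is done. Here is a minimal sketch, assuming each comment has already been hand-tagged with one or more codebook themes. The comments and theme names below are made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical hand-coded comments: a human coder has already tagged each one,
# and a single comment can carry more than one codebook theme.
coded_comments = [
    ("Maintenance fixed my sink the same day.", ["maintenance"]),
    ("The office never answers emails about work orders.", ["communication", "maintenance"]),
    ("Hallways are dirty and nobody says when cleaning happens.", ["cleanliness", "communication"]),
    ("Great response time on repairs.", ["maintenance"]),
]

def summarize(coded):
    """Return (theme, comment count, first example quote), most frequent first."""
    counts = Counter()
    quotes = defaultdict(list)
    for text, themes in coded:
        for theme in dict.fromkeys(themes):  # de-duplicate tags while keeping order
            counts[theme] += 1
            quotes[theme].append(text)
    return [(theme, n, quotes[theme][0]) for theme, n in counts.most_common()]

for theme, n, quote in summarize(coded_comments):
    print(f'{theme}: {n} comments, e.g. "{quote}"')
```

The code is trivial. The slow, expensive part is the human work that produces `coded_comments` in the first place.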

This is classic content/thematic analysis, and it works, but it is inherently labor-intensive and slow to turn around. Even modern training materials for qualitative analysis still explicitly list manual analysis and transcript-based coding as options, alongside software-assisted approaches.

There’s also a formal “quality” side to this. When multiple humans code text, you typically need intercoder reliability practices to reduce subjectivity and drift.
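Cohen's kappa is a common way to quantify intercoder reliability. It compares how often two coders agree with how often they would agree by chance alone: κ = (p_o − p_e) / (1 − p_e). The article does not prescribe a particular statistic. This is a minimal sketch that assumes two coders each assign one theme per comment, with invented labels.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each give one label to the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must label the same, non-empty set of items")
    n = len(coder_a)
    # Observed agreement: share of items where both coders chose the same label.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Expected chance agreement, from each coder's own label frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1:
        return 1.0  # both coders used one identical label for every item
    return (p_o - p_e) / (1 - p_e)

coder_a = ["maintenance", "maintenance", "communication", "safety", "communication", "maintenance"]
coder_b = ["maintenance", "communication", "communication", "safety", "communication", "maintenance"]
print(round(cohens_kappa(coder_a, coder_b), 3))
```

Here the coders agree on 5 of 6 comments, but kappa comes out around 0.74 because some of that agreement would happen by chance. If a comment can carry several themes, a common adjustment is to compute kappa separately for each theme as a yes/no label.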

And this isn’t new. Long before today’s AI wave, public-sector evaluators were already using content analysis to structure and interpret written, open-ended material — because that’s what you had to do if you wanted a signal from free text.

The Practical Problem

If you run multifamily, commercial/tenant, or employee survey programs across many locations or teams, manual coding doesn’t just cost time — it creates a delay that reduces trust:

- The feedback moment passes.

- Leaders get insights late.

- Action plans become generic because the nuance gets lost in summarization.

So naturally, the industry started looking for ways to automate the process. The first generation of so-called AI tools promised exactly that, but what they delivered was something much less intelligent.

Early AI Wasn’t Really AI (and It Often Lied With Confidence)

Early attempts at AI sentiment analysis for surveys relied on simple rules and word scoring rather than true language understanding. These systems could process text quickly, but they often produced misleading results.

Before modern NLP became widely available, many teams tried to automate sentiment analysis using relatively simple techniques, such as:

- Keyword counts or word clouds – highlighting frequently used words without understanding meaning.

- Simple rules – logic triggers like, “if comment contains ‘rude’ → negative sentiment.”

- Lexicon scoring – assigning positive or negative values to individual words.
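Here is a minimal sketch of the first two techniques: keyword counting (the data behind a word cloud) and a single hard-coded rule. The stopword list, the rule, and the comments are illustrative assumptions and do not reflect any particular vendor's implementation.

```python
import re
from collections import Counter

# Illustrative stopword list; real tools ship much longer ones.
STOPWORDS = {"the", "is", "was", "and", "a", "to", "but", "my", "are"}

def keyword_counts(comments):
    """Count non-stopword tokens across all comments (what a word cloud visualizes)."""
    counts = Counter()
    for comment in comments:
        tokens = re.findall(r"[a-z']+", comment.lower())
        counts.update(t for t in tokens if t not in STOPWORDS)
    return counts

def rule_label(comment):
    """A single keyword rule: any mention of 'rude' is treated as negative."""
    return "negative" if "rude" in comment.lower() else "neutral"

comments = [
    "The leasing staff was rude.",
    "Staff are never rude, and maintenance is fast.",
    "Maintenance is fast but the gym is dirty.",
]
print(keyword_counts(comments).most_common(3))
print([rule_label(c) for c in comments])
```

The rule labels the second comment negative even though it is praise, and the word counts rank “rude” as highly as “maintenance.” Neither technique looks at what the comment actually means.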

Lexicon-based sentiment analysis became popular because it was fast and easy to implement. A well-known example is VADER (2014), a lexicon- and rule-based model designed to score sentiment in short, informal text such as social media posts.

The problem is that lexicons analyze words, not meaning, and real survey comments rarely behave that simply.

In reality, survey comments often include language patterns that lexicon models struggle with:

- Mixed sentiment: “Maintenance is quick, but communication is terrible.”

- Negation: “The experience was not bad.”

- Dialect and local language: Words like “pished,” “mad,” “sick,” “brilliant,” or “wee” can mean very different things depending on context.

- Industry-specific phrases: Phrases like “make-ready,” “CAM charges,” “work orders,” “turns,” or “portfolio” carry meaning that generic models may miss.
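The first two failure modes are easy to reproduce. Below is a deliberately naive word-level lexicon scorer. The word weights are made-up assumptions. Real lexicons such as VADER add rules for negation, intensifiers, and punctuation, which this sketch leaves out on purpose.

```python
import re

# Hypothetical word weights; real lexicons are far larger and human-validated.
LEXICON = {"quick": 1.0, "great": 1.0, "terrible": -1.0, "bad": -1.0, "rude": -1.0}

def lexicon_score(comment):
    """Sum per-word weights, ignoring negation and clause structure entirely."""
    tokens = re.findall(r"[a-z']+", comment.lower())
    return sum(LEXICON.get(t, 0.0) for t in tokens)

def label(score):
    """Map a numeric score to a coarse sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

examples = [
    "Maintenance is quick, but communication is terrible.",  # mixed sentiment
    "The experience was not bad.",                           # negation
]
for text in examples:
    print(label(lexicon_score(text)), "|", text)
```

The mixed comment nets out to “neutral,” which hides both a strength and a problem. The negated comment comes out “negative” even though it is mildly positive. Dialect and industry terms fail the same way: a word list has no context to draw on.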

In other words, these early systems looked automated, but they often misunderstood the very feedback they were meant to analyze.

Which brings us to the modern shift.

What Modern AI Changed: Sentiment Became Contextual — and Operational

The big leap in AI sentiment analysis came from transformer-based models — the family popularized by BERT (Bidirectional Encoder Representations from Transformers). Unlike earlier systems that relied on static word lists, these models learn meaning from context, allowing them to interpret phrases, relationships between words, and nuance within sentences.

In practical survey terms, this means modern AI can now analyze open-ended feedback much more intelligently, and do it at speed.

Today’s AI sentiment analysis platforms can automatically perform tasks like:

- Theme discovery – identifying the “what” respondents are talking about.

- Sentiment by theme – understanding how people feel about each topic.

- Actionable summaries – turning large volumes of comments into clear takeaways.

- Segmentation – analyzing feedback by property, building, region, department, manager, etc.

- Trend deltas – identifying what changed since the last cycle.
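Once a model has tagged each comment with a theme and a sentiment label, sentiment by theme, segmentation, and trend deltas come down to grouping and subtraction. This is a minimal sketch that assumes labeled records with hypothetical fields `cycle`, `segment`, `theme`, and `sentiment`. The model step that produces those labels is out of scope here.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class LabeledComment:
    # Hypothetical schema; labels are assumed to come from an upstream model.
    cycle: str      # survey cycle, e.g. "Q1" or "Q2"
    segment: str    # property, region, department, manager, ...
    theme: str      # e.g. "maintenance", "communication"
    sentiment: str  # "positive", "neutral", or "negative"

def negative_share(records, cycle):
    """Share of negative comments per (segment, theme) within one cycle."""
    totals, negatives = defaultdict(int), defaultdict(int)
    for r in records:
        if r.cycle != cycle:
            continue
        key = (r.segment, r.theme)
        totals[key] += 1
        negatives[key] += int(r.sentiment == "negative")
    return {key: negatives[key] / totals[key] for key in totals}

def deltas(records, previous, current):
    """Change in negative share, in percentage points, for keys present in both cycles."""
    before = negative_share(records, previous)
    after = negative_share(records, current)
    return {key: round((after[key] - before[key]) * 100, 1) for key in after if key in before}

records = [
    LabeledComment("Q1", "Property A", "maintenance", "positive"),
    LabeledComment("Q1", "Property A", "maintenance", "negative"),
    LabeledComment("Q2", "Property A", "maintenance", "negative"),
    LabeledComment("Q2", "Property A", "maintenance", "negative"),
    LabeledComment("Q1", "Property B", "communication", "positive"),
    LabeledComment("Q2", "Property B", "communication", "positive"),
]
for (segment, theme), change in deltas(records, "Q1", "Q2").items():
    print(f"{segment} / {theme}: {change:+.1f} pts negative share")
```

Reporting the change in percentage points rather than as a relative percent keeps a statement like “down 9%” unambiguous. You may also want to suppress segments with too few comments to support a trend.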

This shift is why teams are moving from “sample 200 comments” to “analyze everything.”

As Gallup notes, open-ended survey questions are powerful but often underused because analysis is time-consuming and labor-intensive; NLP methods help overcome that bottleneck and make open text more usable.

And in large-scale property feedback, “large” is not theoretical: The 2024 NMHC and Grace Hill Renter Preferences Survey Report looked at 172,703 renter responses across 4,220 multifamily properties — a volume that demands automation if you want timely insight.
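A back-of-the-envelope calculation shows the scale. The report does not supply this figure; it assumes, purely for illustration, that every response contains one comment to code and that a trained coder spends 30 seconds reading and tagging each one:

$$
172{,}703 \text{ comments} \times 30 \text{ s} = 5{,}181{,}090 \text{ s} \approx 1{,}439 \text{ hours} \approx 36 \text{ forty-hour weeks}
$$

That is one person's full-time work for most of a year, before any double-coding for quality control.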

But the real impact of this shift shows up when you apply it to the kinds of large, distributed feedback programs common in multifamily, commercial real estate, and employee surveys.

Why This Matters for Multifamily, Commercial, and Employee Surveys

Open-ended survey responses carry some of the most valuable operational insights — but only if you can analyze them quickly and accurately. In industries like multifamily housing and commercial property management, feedback often comes from many locations, teams, and stakeholders at once, creating both volume and complexity.

That’s why modern AI sentiment analysis is increasingly embedded directly into feedback platforms — helping organizations move from collecting comments to understanding them fast enough to act.

Multifamily Resident Surveys: Feedback tied to demand, not just service recovery

In multifamily housing, resident sentiment isn’t just an internal KPI. It directly affects the top of the leasing funnel.

The Renter Preferences Survey Report found:

- 69% of renters consider reviews important when searching.

- 79% say negative reviews could deter them from touring.

This is why renter sentiment analysis has become increasingly important for property operators.

Reviews and resident feedback now influence whether prospective renters even consider touring a community. Because of that, many operators now combine survey data with apartment review sentiment analysis to understand how residents describe their experience publicly.

That’s the business case for speed: If your feedback loop runs monthly or quarterly, you’re late.

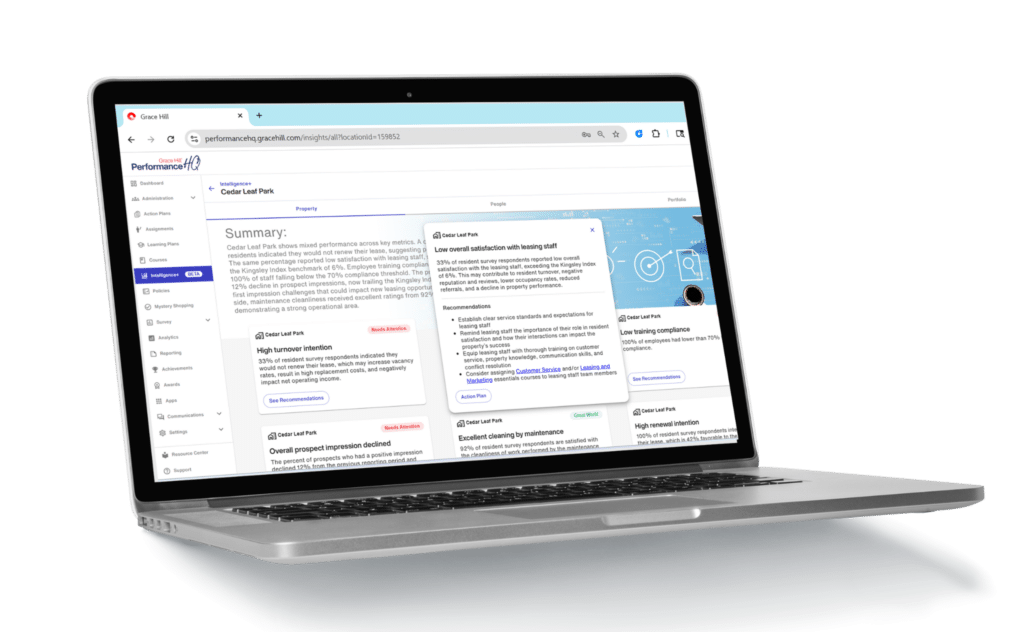

Modern survey platforms like Grace Hill’s Surveys in PerformanceHQ help operators capture resident sentiment across communities, while AI-powered tools like Intelligence+ analyze open-ended comments to quickly surface themes and sentiment trends. That makes it easier for teams to identify emerging issues — before they show up in public reviews.

➡️ Report: Measuring EliseAI’s Impact on Resident NPS with Grace Hill’s Survey Data

Commercial Surveys: Different themes, same problem

Commercial and tenant experience tends to revolve around themes like comfort, cleanliness, responsiveness, safety/security, shared-space experience, and building communication.

But the language is nuanced. Tenants might describe HVAC problems in very different ways: “too cold in conference rooms” vs. “air feels stale.” How a property management team responds depends on quickly separating:

- What the issue is.

- How severe tenants perceive it to be.

- Where it’s happening.

- Whether it’s trending.

AI helps because it can classify at scale without flattening nuance into a single score. Platforms that combine survey collection with AI survey analysis allow operators to identify building-level issues faster and prioritize actions.

Employee Surveys: Speed builds trust

Employee feedback follows a similar pattern. Employees are more likely to believe in survey programs when leadership responds quickly and specifically. If it takes weeks to read, code, reconcile, and write up comments, the outcome tends to be:

- Broad themes

- Cautious phrasing

- Delayed “you said/we did” communication

AI doesn’t replace judgment — but it does remove the “sorting and summarizing” choke point. Tools like Intelligence+ help HR and operations leaders quickly identify themes, sentiment shifts, and emerging concerns across locations or teams, making it easier to respond while feedback is still relevant.

How To Use AI Sentiment Analysis in Surveys: A Practical Playbook

If you want AI sentiment to be fast and defensible — especially with executives — run it like a product.

- Do topic-level sentiment, not just overall positivity: A single sentiment score hides the “why” behind feedback.

- Validate on your own data, by industry and region: Multifamily comments read differently from employee feedback; commercial tenant comments read differently again.

- Keep humans-in-the-loop for edge cases: Dialect, sarcasm, and mixed sentiment need spot checks.

- Treat taxonomy as governance: Your themes are an operating system, so keep them stable, versioned, and explainable.

- Report deltas, not just snapshots: “Maintenance communication down 9% WoW in Region B” drives action.

- Keep receipts: Always show example quotes behind the theme/sentiment rollups. People trust what they can read.
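Validating on your own data can start small. Have people hand-label a sample of comments from each segment, then measure how often the model's labels match, segment by segment. This is a minimal sketch that assumes you already have paired model and human labels. The data and the 0.8 review threshold are illustrative assumptions, not benchmarks.

```python
from collections import defaultdict

def agreement_by_segment(samples):
    """samples: iterable of (segment, model_label, human_label) tuples."""
    hits, totals = defaultdict(int), defaultdict(int)
    for segment, model_label, human_label in samples:
        totals[segment] += 1
        hits[segment] += int(model_label == human_label)
    return {segment: hits[segment] / totals[segment] for segment in totals}

def segments_needing_review(samples, threshold=0.8):
    """Segments whose model/human agreement falls below the chosen threshold."""
    rates = agreement_by_segment(samples)
    return sorted(segment for segment, rate in rates.items() if rate < threshold)

samples = [
    ("multifamily", "negative", "negative"),
    ("multifamily", "positive", "positive"),
    ("multifamily", "neutral", "positive"),
    ("multifamily", "negative", "negative"),
    ("multifamily", "positive", "positive"),
    ("employee", "negative", "positive"),
    ("employee", "neutral", "neutral"),
    ("employee", "negative", "negative"),
]
print(agreement_by_segment(samples))
print(segments_needing_review(samples))
```

Raw agreement is the simplest check. On small or unbalanced samples, a chance-corrected measure such as Cohen's kappa, or a per-label breakdown, tells you more about where human spot checks are needed.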

Key Takeaway

AI didn’t just make sentiment analysis faster — it made it operational: weekly trending, location/team segmentation, and summaries that ship while the feedback is still fresh enough to act on.

But the real advantage comes when you respect the human element, especially as it relates to language. Survey comments are local, contextual, and sometimes messy, and the systems that understand those nuances consistently produce better insights.

If your AI can recognize that “pished” depends on where you learned English, then you’re already ahead of most dashboards.

FAQs About AI Sentiment Analysis for Surveys

Here are a few answers to common questions related to AI sentiment analysis for surveys.

1. How accurate is AI sentiment analysis for survey comments?

Modern AI sentiment analysis uses NLP to interpret context, tone, and relationships between words, making it far more accurate than older keyword-based methods. Accuracy improves when models are tuned to the specific language used in survey responses and include human review to validate edge cases.

2. Can AI analyze multifamily resident reviews?

Yes. AI can analyze both resident survey comments and apartment reviews to identify patterns in renter sentiment across maintenance, communication, amenities, and community experience. This type of sentiment analysis in multifamily feedback helps property teams quickly spot issues that may influence leasing demand or resident satisfaction.

3. Why does dialect matter in sentiment analysis?

Dialect matters because the meaning of words can vary by region, culture, or context. For example, words like “mad,” “sick,” or “brilliant” can signal different sentiments depending on how they’re used. Modern NLP models evaluate the surrounding context to interpret sentiment more accurately.

4. Is AI sentiment analysis better than manual coding?

AI sentiment analysis is significantly faster than manual coding, analyzing thousands of survey comments in minutes. This makes it practical to review all feedback rather than just small samples. Many organizations use AI for initial analysis and human review for interpretation, combining speed with accuracy.

Stop Guessing and Start Acting

Intelligence+ makes it easy to go from data to direction. Built into PerformanceHQ, Intelligence+ builds on a connected, prioritized view of performance, highlighting what’s working, what’s not, and what to do next.
